4 Years in Limbo

How the FDA limbo crushed a $28M startup.

Hey - It’s Nico.

Welcome to another Failory edition. This issue takes 5 minutes to read.

If you only have one, here are the 3 most important things:

Kintsugi, a startup building an AI depression detector, has shut down — learn more below

Perplexity Computer is the new OpenClaw - learn more below

A huge thanks to today’s sponsor, Oceans. Hire U.S.-caliber finance talent at up to 80% less cost with their help.

Hire U.S. caliber Finance talent for up to 80% less AD

Skip partial finance support. You need someone who closes the books, tracks cash weekly, and makes sure there are no surprises.

Your baseline requirements:

On-time month-end close

Weekly cash and runway tracking

A forecast you can run the business on

Oceans Talent places finance pros who think ahead, reconcile accounts, manage cashflow, and stay ahead of reporting deadlines.

400+ founders have hired Accountants, Financial Controllers, FP&A Analysts, and more with Oceans Talent.

This Week In Startups

🔗 Resources

Becoming an AI-native operator.

How to describe your product (quickly).

How AI agents actually work (and how to build one), live *

📰 News

Anthropic adds plug-ins for finance, engineering and design.

Guide Labs debuts a new kind of interpretable LLM.

Google’s new Gemini Pro model has record benchmark scores.

Meta’s metaverse leaves virtual reality.

💸 Fundraising

Nvidia challenger AI chip startup MatX raised $500M.

Wayve raises $1.2B to scale embodied AI for autonomous driving.

Music startup Mogul raises $5M.

AI marketing startup Gushwork raises $9M.

* sponsored

Fail(St)ory

Listening between the lines

Kintsugi shut down after nearly four years chasing FDA clearance for an AI tool that listened to your voice and flagged depression and anxiety.

It raised $28M, published real studies, ran pilots, and then ran out of oxygen anyway.

What Was Kintsugi:

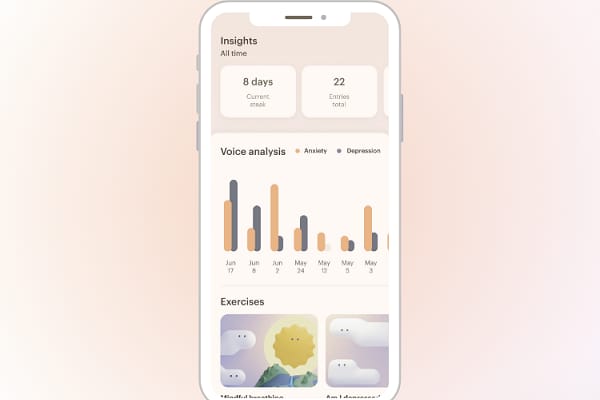

Kintsugi started in 2019 with a pretty crisp thesis: your voice carried measurable signals of mental state. Not the words, the acoustics: pitch, cadence, pauses, energy, that subtle drag you heard when someone wasn’t okay.

The idea: build an AI that “listened between the lines.” You gave a short voice sample, and the system tried to detect whether you were depressed or anxious. It didn’t focus on what you said. It focused on how you said it.

Of course, Kintsugi knew that an AI tool that flagged depression in a clinical setting was going to be treated like a medical device. That meant the FDA. And the FDA meant time, paperwork, studies, and a whole new set of costs that don’t show up in normal startup decks.

So the team did the thing investors always say they want. They generated evidence. They published peer-reviewed results showing their model could screen for depression from short voice samples with sensitivity and specificity in the low 70s.

Funding followed. They raised an $8M seed in 2021, then a $20M Series A in 2022. The pitch was easy to underwrite: huge prevalence, underserved detection, AI cost curve, clear ROI if you can route people to care earlier.

Then the timeline broke. FDA clearance became the critical path, and the critical path became the company. Founder Grace Chang said the company spent nearly four years waiting for clearance, with more than $16M going into the process and another $1M to $4M needed to finish.

Meanwhile the cost structure didn’t care about any regulatory Gantt chart. Keeping AI engineers on $400K to $500K compensation packages turned into a burn-rate hostage situation.

They tried the obvious escape hatches: they explored fundraising and considered acquisition offers, but ended up choosing neither.

So they shut down and published the work. Kintsugi released its models and research as open-source, basically donating years of effort to the ecosystem.

The Numbers:

🚀 Founded: 2019

💰 Total raised: $28M (Seed $8M in 2021, Series A $20M in 2022)

🧾 FDA spend: $16M+ already, plus $1M to $4M more to finish

📚 Evidence: large-scale peer-reviewed study showing ~71% sensitivity and ~74% specificity for depression screening from short voice samples
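To make the sensitivity and specificity figures concrete, here is a minimal Python sketch of what they imply for a screening run. The population size and the 10% prevalence are assumptions picked for illustration; they are not from Kintsugi's study.

```python
def screening_outcomes(population, prevalence, sensitivity, specificity):
    """Split a screened population into the four confusion-matrix cells."""
    with_condition = population * prevalence
    without_condition = population - with_condition

    true_pos = with_condition * sensitivity      # depressed and flagged
    false_neg = with_condition - true_pos        # depressed but missed
    true_neg = without_condition * specificity   # healthy and cleared
    false_pos = without_condition - true_neg     # healthy but flagged

    return {
        "true_pos": true_pos,
        "false_neg": false_neg,
        "true_neg": true_neg,
        "false_pos": false_pos,
        # Share of flagged people who actually have the condition.
        "ppv": true_pos / (true_pos + false_pos),
    }


# Assumed inputs: 1,000 people screened, 10% prevalence (illustrative only).
result = screening_outcomes(1000, 0.10, 0.71, 0.74)
print({name: round(value, 2) for name, value in result.items()})
```

Under that assumed prevalence, about 71 of 100 real cases get flagged alongside roughly 234 false alarms, so only around 23% of flags point to someone who is actually depressed. That is a reasonable profile for a first-pass screen, and a weak one for a diagnosis.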

Reasons for Failure:

Venture math didn’t fit regulatory latency: Kintsugi was a venture-backed company operating on a venture clock, but it picked a product path with a regulator’s clock. That mismatch was the real failure mode. When your go-to-market is gated by FDA clearance, “runway” becomes a policy decision you don’t control.

They tried to pivot, but it was too late: Kintsugi eventually launched a second product called Kintsugi Signal, a deepfake voice detector meant to tell if a voice was human or AI-generated. It was a clear attempt to find revenue outside the FDA bottleneck. Signal may have worked, but it lived in a different world: new buyers (security and fraud teams), new procurement paths, and zero free distribution from their healthcare relationships.

They anchored too hard on “clinical-grade” and left money on the table. Kintsugi chose the hardest version of the product early: a screening tool that looked and smelled like a medical device. That decision forced them into FDA timelines before they had a durable cash engine. They could have packaged earlier versions as lighter “wellness” tooling, employer mental health screening, coaching triage, or even analytics for existing behavioral health providers, then used that cash to fund the clinical path.

Why It Matters:

FDA-gated products need decade-capital, not Series A optimism.

Don’t wait for clearance to figure out cash flow. Build a real revenue line early.

Trend

Perplexity Computer

Yesterday, Perplexity launched their own OpenClaw competitor.

It’s called Perplexity Computer and it’s designed to unify 19 different AI models into one coordinated system. According to Perplexity, the orchestrator can run for hours or even months.

Why it Matters

Orchestration becomes the moat. Perplexity is not claiming the best model. They are routing tasks across 19 models and stitching outputs into finished workflows.

Bundling across providers creates lock-in. If one system can fluidly use Claude-class coding, GPT-class reasoning, and Gemini-style search inside a single workflow, you stop caring which vendor powers which step.

Sandboxed agents are the enterprise path. Long-running automation scares companies when it touches local machines. A contained cloud environment is easier to approve, audit, and standardize.

What is it

Perplexity calls it a “general-purpose digital worker.”

Say you ask it to “build a competitive analysis site for AI coding tools.”

First, a research agent scans the web and pulls current data. Then a synthesis agent structures that into categories, pricing tiers, feature matrices. A writing agent drafts the copy in your specified tone. A coding agent generates a working web app. Another component tests and refines. The system then packages and delivers the final asset.

You did not have to micromanage those steps. The system delegated internally. That is the multi-agent layer.

Instead of one giant model pretending to reason, search, code, and remember all at once, the system splits the task into subtasks. Each subtask gets routed to a specialized component.

But the most important part is that Perplexity Computer can orchestrate across 19 models from different providers. So the deep research step might be assigned to Gemini. The coding step might go to Claude. And the summarization might be handled by GPT.

You are not picking models one by one. The system chooses the best AI for the task.

In theory, that means you get best-of-breed performance for each slice of work without manually stitching APIs together.
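The routing idea is easier to see in code. The sketch below is a toy, not Perplexity's implementation: the `Subtask` type, the routing table, and the stub model functions are all hypothetical, and the model names only mirror the example in the text.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Subtask:
    kind: str    # e.g. "research", "coding", "summary"
    prompt: str


def make_stub(model_name: str) -> Callable[[str, str], str]:
    """Return a fake model call; a real system would hit a provider API here."""
    def call(prompt: str, context: str) -> str:
        return f"[{model_name}] {prompt}"
    return call


# Hypothetical routing table: one specialized model per kind of work.
ROUTES: Dict[str, Callable[[str, str], str]] = {
    "research": make_stub("gemini"),
    "coding": make_stub("claude"),
    "summary": make_stub("gpt"),
}


def run_plan(plan: List[Subtask]) -> List[str]:
    """Run subtasks in order, feeding each step's output to the next."""
    outputs: List[str] = []
    context = ""
    for task in plan:
        handler = ROUTES[task.kind]            # pick the model for this step
        result = handler(task.prompt, context)
        outputs.append(result)
        context = result                       # next step sees this output
    return outputs


plan = [
    Subtask("research", "collect pricing for AI coding tools"),
    Subtask("coding", "build a comparison page"),
    Subtask("summary", "write a short overview"),
]
for line in run_plan(plan):
    print(line)
```

The user only writes the objective. The routing table, not the user, decides which model handles which step, and that table is where an orchestrator adds its value.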

The other important piece is persistence.

This is not a session that dies when you close a tab. Computer can run for hours or months. You can tell it to monitor competitor pricing weekly, update a dashboard, and notify you when something changes. It operates on a schedule.
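As a rough illustration of the weekly monitoring example, here is a minimal Python sketch of the "notify me when something changes" check. The function name and the price snapshots are hypothetical, and the scheduler that would call it each week (cron or similar) is assumed and left out.

```python
from typing import Dict, Optional, Tuple


def price_changes(
    previous: Dict[str, float], current: Dict[str, float]
) -> Dict[str, Tuple[Optional[float], float]]:
    """Return competitors whose price is new or differs from the last snapshot."""
    changes = {}
    for name, price in current.items():
        old = previous.get(name)  # None if this competitor was not tracked before
        if old != price:
            changes[name] = (old, price)
    return changes


# Hypothetical snapshots from two consecutive weekly runs.
last_week = {"tool_a": 20.0, "tool_b": 30.0}
this_week = {"tool_a": 20.0, "tool_b": 35.0, "tool_c": 15.0}

for name, (old, new) in price_changes(last_week, this_week).items():
    print(f"{name}: {old} -> {new}")
```

A persistent agent would store each snapshot, rerun the check on its schedule, and only ping you when the returned dictionary is not empty.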

The Trend

To me, Perplexity Computer sits in the same philosophical lane as OpenClaw.

Both position themselves as digital workers that “get things done”. You hand over an objective, they decompose it, operate across tools, and return something finished. Multi-step reasoning. Workflow execution. Persistence over days, not minutes.

The main split is where they do the work:

OpenClaw is self-hosted and close to your environment. That proximity gives power and flexibility, but it also raises the stakes on security and deployment.

Perplexity keeps everything inside a managed cloud sandbox. This is much safer, more standardized and easier to pitch to an enterprise buyer.

In a sense, it is a more packaged, less chaotic version of OpenClaw. And that is probably what many users wanted after OpenClaw went viral a month ago. The ambition, without the operational anxiety.

I think we are starting to see a trend here. Call it openclawfication (bad name).

Over the next few months, I expect more products to move in this direction: orchestration layers that sit on top of multiple models and tools. Systems built around delegation, execution and persistence.

Help Me Improve Failory

How useful did you find today’s newsletter? Your feedback helps me make future issues more relevant and valuable.

That's all for today’s edition.

Cheers,

Nico